For $f(x) = x_1^2 - x_1 x_2 + 2x_2^2 - 2x_1 + e^{x_1 + x_2}$, check if the direction $d = (1, 2)$ at point $(0, 0)$ is a descent direction for the function $f$.
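
A minimal Python sketch of this check, assuming the function is $f(x) = x_1^2 - x_1 x_2 + 2x_2^2 - 2x_1 + e^{x_1 + x_2}$ (the operator signs are an assumption, since the flattened text does not preserve them). A direction $d$ is a descent direction at a point when the directional derivative $\nabla f \cdot d$ is negative there. The helper names `grad_f` and `is_descent_direction` are illustrative only.

```python
import math


def grad_f(x1, x2):
    # Gradient of f(x) = x1^2 - x1*x2 + 2*x2^2 - 2*x1 + exp(x1 + x2).
    # The signs are an assumption; the source text lost them.
    e = math.exp(x1 + x2)
    return (2 * x1 - x2 - 2 + e, -x1 + 4 * x2 + e)


def is_descent_direction(grad, d):
    # d is a descent direction when the slope grad . d is strictly negative.
    slope = sum(g * di for g, di in zip(grad, d))
    return slope < 0, slope


g = grad_f(0.0, 0.0)
descent, slope = is_descent_direction(g, (1.0, 2.0))
print("gradient:", g, "slope along d:", slope, "descent direction:", descent)
```

Under that assumed form of $f$, the gradient at the origin is $(-1, 1)$, so the slope along $d = (1, 2)$ is $+1$ and $d$ would not be a descent direction.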

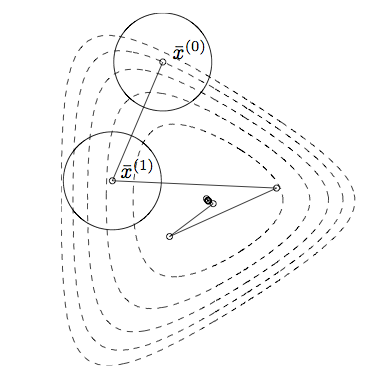

Example of a descent direction. The opposite direction, $-\nabla f(a)$, is the direction of steepest descent. Modified Newton direction. 4. Update the current point: $x_{k+1} = x_k + p_k$.

Otherwise, compute the normalized search direction $p_k = -g(x_k)/\|g(x_k)\|$. The function value at the starting point is. The reader will recall that $p_k$ is a descent direction for $f$ at $x_k$ if $\nabla f(x_k)^T p_k < 0$.

A classic example is of course ordinary gradient ascent, whose search direction is simply the gradient. Use the steepest descent direction to search for the minimum of $f(x_1, x_2) = 25x_1^2 + x_2^2$, starting at $x^{(0)} = [1 \; 3]^T$ with a step size of $\alpha = 0.5$. The gradient of this function is $\nabla f = \partial f / \partial x$.
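
The iteration can be sketched in Python as follows. This is an illustration under stated assumptions, not the original worked solution: it assumes the objective is $f(x_1, x_2) = 25x_1^2 + x_2^2$, the start is $(1, 3)$, the step size is $\alpha = 0.5$, and the search direction is the normalized negative gradient. The function name `normalized_descent` is hypothetical.

```python
import math


def f(x1, x2):
    # Assumed objective; the formula is reconstructed from flattened text.
    return 25 * x1 ** 2 + x2 ** 2


def grad_f(x1, x2):
    return (50 * x1, 2 * x2)


def normalized_descent(x, alpha, iterations):
    """Fixed-step descent along the unit-length negative gradient."""
    history = [(x, f(*x))]
    for _ in range(iterations):
        g = grad_f(*x)
        norm = math.hypot(g[0], g[1])
        if norm == 0.0:
            break  # stationary point: no direction to move in
        p = (-g[0] / norm, -g[1] / norm)  # normalized search direction
        x = (x[0] + alpha * p[0], x[1] + alpha * p[1])
        history.append((x, f(*x)))
    return history


history = normalized_descent((1.0, 3.0), alpha=0.5, iterations=3)
for point, value in history:
    print(point, value)
```

Because the direction has unit length, every iterate moves exactly a distance $\alpha$. A fixed step therefore cannot settle on the minimizer; in practice the step is shrunk or chosen by a line search.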

In particular, $-\nabla f(x)$ is a descent direction if nonzero. The direction of steepest descent for $f(x)$ at any point is $d = -c$ or, normalized, $d = -c/\|c\|_2$, where $c = \nabla f(x)$. Example. Descent directions can come from, for example, the CG method, BFGS, or its limited-memory version L-BFGS.

Slow and resource-demanding algorithm. Example: We apply the Method of Steepest Descent to the function $f(x, y) = 4x^2 - 4xy + 2y^2$ with initial point $x_0 = (2, 3)$. Diagonally Scaled Steepest Descent.
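
A short Python sketch of this example, assuming the function is $f(x, y) = 4x^2 - 4xy + 2y^2$ (the minus sign on the cross term is an assumption) and that each step uses an exact line search. For a quadratic $f = \tfrac{1}{2} v^T A v$, the step that minimizes $f$ along $-g$ is $\alpha = (g^T g)/(g^T A g)$. The function names are illustrative.

```python
def f(x, y):
    # Assumed objective: 4x^2 - 4xy + 2y^2, i.e. 0.5 * v^T A v
    # with A = [[8, -4], [-4, 4]].
    return 4 * x * x - 4 * x * y + 2 * y * y


def grad(x, y):
    return (8 * x - 4 * y, -4 * x + 4 * y)


def exact_step(g):
    # Exact line-search step for this quadratic: (g . g) / (g . A g).
    ag = (8 * g[0] - 4 * g[1], -4 * g[0] + 4 * g[1])
    gg = g[0] * g[0] + g[1] * g[1]
    gag = g[0] * ag[0] + g[1] * ag[1]
    return gg / gag


def steepest_descent(x, y, iterations):
    path = [(x, y)]
    for _ in range(iterations):
        g = grad(x, y)
        if g == (0, 0):
            break  # already at the minimizer
        a = exact_step(g)
        x, y = x - a * g[0], y - a * g[1]
        path.append((x, y))
    return path


path = steepest_descent(2.0, 3.0, 2)
for px, py in path:
    print((px, py), f(px, py))
```

Starting from $(2, 3)$, the gradient is $(4, 4)$ and the exact step is $\alpha = 0.5$, so the first iterate is $(0, 1)$ and $f$ drops from 10 to 2.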

Gradient Descent - Multiple Variables Example. $f: \mathbb{R}^2 \to \mathbb{R}$ given by $f(x, y) = \frac{1}{2}\sqrt{16 - 4x^2 - y^2}$. Faster and uses fewer resources than Batch Gradient Descent.