The easiest way is to use `numpy.polyfit` to fit a first-degree polynomial.
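Here is a minimal sketch, assuming noise-free sample data invented for illustration. If the points lie exactly on y = 2x + 1, the fit should recover slope 2 and intercept 1. `np.polyfit` returns the coefficients from the highest degree down, and `np.polyval` evaluates the fitted polynomial at given x values.

```python
import numpy as np

# Hypothetical sample data lying exactly on y = 2x + 1
x = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
y = 2 * x + 1

# Fit a first-degree polynomial; coefficients come back highest degree first
slope, intercept = np.polyfit(x, y, 1)

# Evaluate the fitted line at the original x values
y_fit = np.polyval([slope, intercept], x)

print(f"slope={slope:.3f}, intercept={intercept:.3f}")
```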

This section covers how to draw a best-fit line for a linear regression in Python. First, we need to find the parameters (slope and intercept) of the line that fits the data best. We will do this by applying the vectorization concept from linear algebra.
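One way to apply vectorization here is sketched below. It assumes the data are NumPy arrays, and the sample data are hypothetical. First, stack a column of x values and a column of ones into a design matrix `A`. Then solve the least-squares problem `A @ [m, b] ≈ y` in a single call with `np.linalg.lstsq`. The closed-form slope and intercept formulas, written with whole-array operations, give the same result.

```python
import numpy as np

# Hypothetical noisy data scattered around y = 3x - 2
rng = np.random.default_rng(0)
x = np.linspace(0, 10, 50)
y = 3 * x - 2 + rng.normal(scale=0.5, size=x.size)

# Design matrix: one column for x (slope term), one column of ones (intercept term)
A = np.column_stack([x, np.ones_like(x)])

# Solve min ||A @ [m, b] - y||^2 in one vectorized call
(m, b), *_ = np.linalg.lstsq(A, y, rcond=None)

# Equivalent closed-form estimates using vectorized array operations
x_mean, y_mean = x.mean(), y.mean()
m_closed = np.sum((x - x_mean) * (y - y_mean)) / np.sum((x - x_mean) ** 2)
b_closed = y_mean - m_closed * x_mean  # the intercept follows directly from the slope

print(f"slope={m:.3f}, intercept={b:.3f}")
```

Once the slope is known, the intercept is a single expression. This is why it is the easier of the two parameters to compute.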

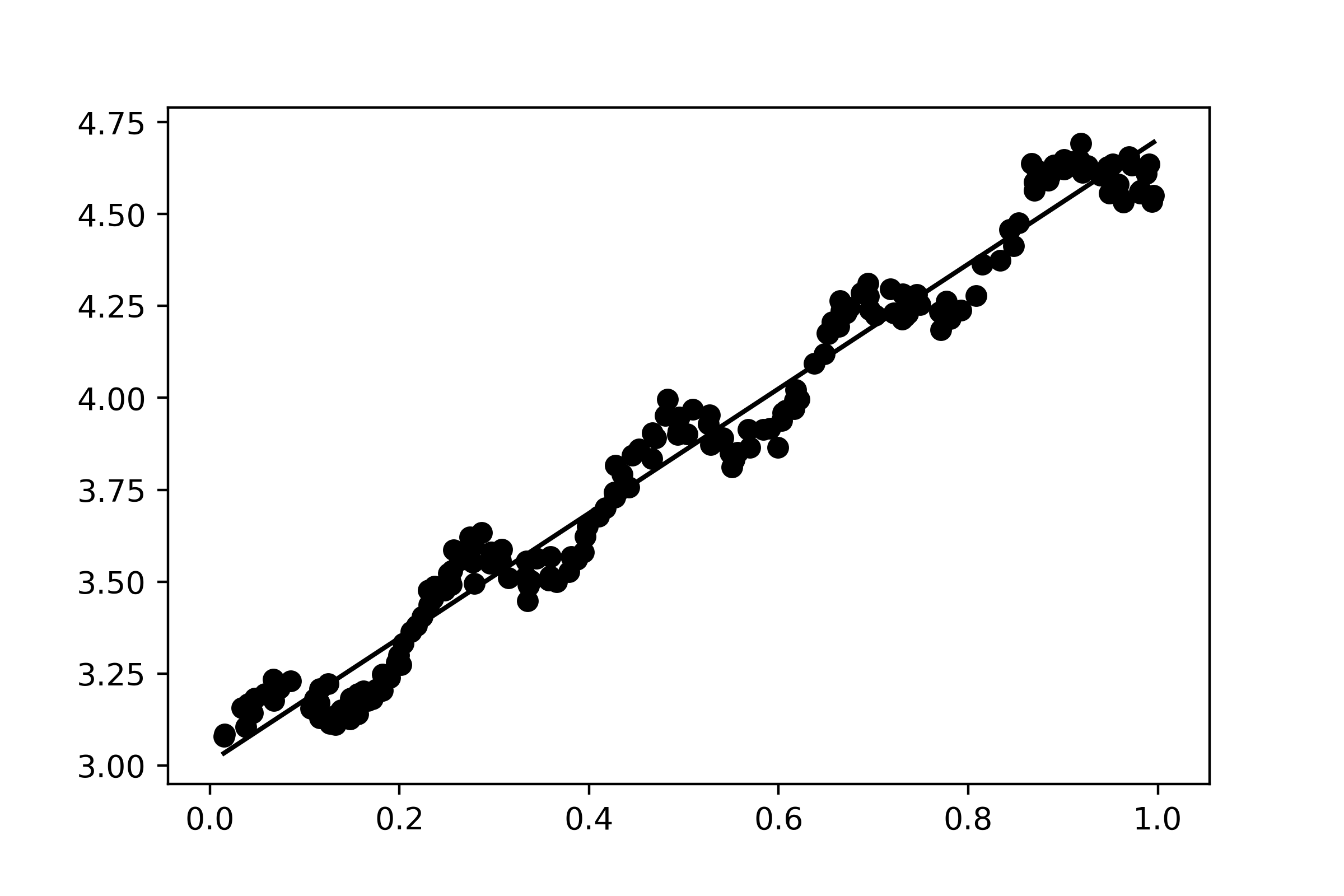

This is because the yhat value paired with a given x before sorting will no longer be paired with it after sorting.

With Matplotlib, you can add a linear regression line to a scatterplot like this:

```python
import numpy as np
import matplotlib.pyplot as plt

# x and y are assumed to be 1-D NumPy arrays of equal length
# create basic scatterplot
plt.plot(x, y, 'o')

# obtain m (slope) and b (intercept) of the linear regression line
m, b = np.polyfit(x, y, 1)

# add the linear regression line to the scatterplot
plt.plot(x, m*x + b)
plt.show()
```

Feel free to modify the colors of the graph as you'd like.

In general, once the slope `a` and intercept `b` are known, you draw the line with `plt.plot(x, a*x+b)`. The following example shows how to use this syntax in practice. The file I am opening contains two columns. If I sort the x array and the yhat array separately, the data changes.
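Sorting x and yhat independently destroys the pairing between them. The sketch below uses hypothetical arrays `x` and `yhat` of equal length. The fix is to compute one permutation with `np.argsort(x)` and apply it to both arrays, so every predicted value stays attached to its own x value. A negative slope makes the problem easy to see.

```python
import numpy as np

# Hypothetical unsorted inputs and predictions from the line yhat = -2x + 1
x = np.array([3.0, 1.0, 4.0, 2.0])
yhat = -2 * x + 1  # pairs: (3, -5), (1, -1), (4, -7), (2, -3)

# One permutation that sorts x; reuse it for yhat so the pairs stay intact
order = np.argsort(x)
x_sorted = x[order]
yhat_sorted = yhat[order]

# Wrong: sorting yhat on its own pairs x=1 with yhat=-7 here
yhat_wrong = np.sort(yhat)
```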

```python
# find line of best fit
a, b = np.polyfit(x, y, 1)
```

Computing the intercept is a bit easier than computing the slope.

For example, `p = numpy.polyfit(mjd, dm, deg=1)` returns a NumPy array of coefficients ordered from the highest degree down. So `p[0]` is the slope and `p[1]` is the intercept of the fitted line. In scikit-learn, `sklearn.linear_model.LinearRegression.fit` takes two arguments: the feature matrix `X` and the target values `y`.

Now the task is to find the line that best fits the scatter plot above, so that we can predict the response for any new feature value (i.e. a value of x not present in the dataset). This line is called a regression line.
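Once you know the slope and intercept, predicting the response for a new x value (one not in the dataset) just means evaluating the line at that value. The sketch below uses hypothetical data. It also shows the coefficient order of `np.polyfit`: `p[0]` is the slope and `p[1]` is the intercept.

```python
import numpy as np

# Hypothetical observations lying exactly on y = 0.5x + 4
x = np.array([1.0, 2.0, 3.0, 5.0, 8.0])
y = 0.5 * x + 4

# p[0] is the slope and p[1] the intercept (highest degree first)
p = np.polyfit(x, y, deg=1)
slope, intercept = p[0], p[1]

# Predict responses for x values that do not appear in the dataset
x_new = np.array([4.0, 10.0])
y_pred = slope * x_new + intercept  # same as np.polyval(p, x_new)
```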

Seaborn offers two functions for this, `regplot()` and `lmplot()`. In the simplest invocation, both draw a scatterplot of two variables, x and y, then fit the regression model y ~ x and plot the resulting regression line along with a 95% confidence interval for that regression.